Tire and Defect Detection

As part of an SBIR grant to Diamond Age from the Department of Transportation, I developed a prototype that detects tires and tire defects. The motivation was to give truck drivers an automated tool for inspecting the tires on their trucks and logging any defects. A driver would walk around the vehicle, capturing views of the sidewall and tread of each tire. The system would then automatically detect defects and log them to a database, tire by tire.

The first task was to detect and identify wheels in a walk-around video. To do this, I used a neural network to detect wheels in individual frames. The model was the “Faster RCNN Resnet50” model from PyTorch (Faster R-CNN with a ResNet-50 backbone). I trained it on a public dataset of truck wheel images, together with a collection of my own images that I labeled by hand. The video below shows the detections in a walk-around video of a Jeep.

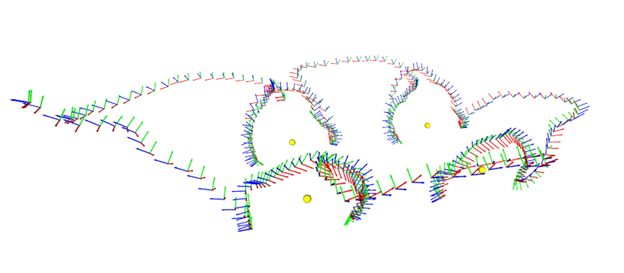

Next, I merged the detections from multiple frames to locate and label each wheel in 3D. This required an estimate of the camera pose in each frame. I used an iPhone app called “CamTrackAR”, which captures motion data along with the video. With this data, I could reconstruct the camera trajectory and the 3D position of each wheel. The wheels were then identified by the order in which they appear on each side of the vehicle. In the figure below, the yellow spheres represent the detected tire positions.

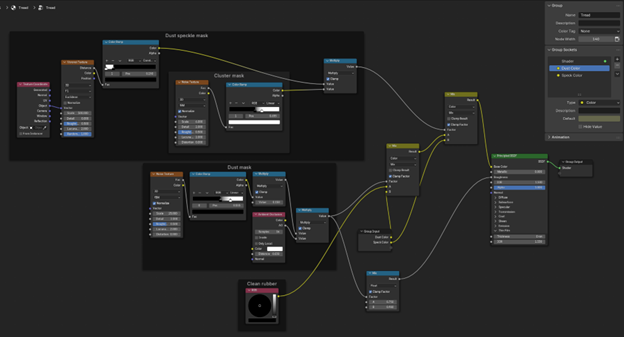
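One common way to turn several 2D detections of the same wheel into a single 3D position is to cast a ray from each camera position through the detection and find the point closest to all rays in the least-squares sense. The sketch below illustrates that idea only; the write-up does not say which method was actually used. It assumes the ray origins and directions are already expressed in world coordinates (derived from the tracked camera pose and the bounding-box center), and all function names are hypothetical.

```python
import math


def normalize(v):
    """Return v scaled to unit length."""
    n = math.sqrt(sum(c * c for c in v))
    if n == 0.0:
        raise ValueError("ray direction must be non-zero")
    return [c / n for c in v]


def solve_3x3(A, b):
    """Solve A x = b by Gaussian elimination with partial pivoting."""
    M = [list(row) + [rhs] for row, rhs in zip(A, b)]
    for col in range(3):
        pivot = max(range(col, 3), key=lambda r: abs(M[r][col]))
        if abs(M[pivot][col]) < 1e-12:
            raise ValueError("rays are (nearly) parallel; position is not determined")
        M[col], M[pivot] = M[pivot], M[col]
        for r in range(col + 1, 3):
            f = M[r][col] / M[col][col]
            for c in range(col, 4):
                M[r][c] -= f * M[col][c]
    x = [0.0, 0.0, 0.0]
    for r in (2, 1, 0):
        s = M[r][3] - sum(M[r][c] * x[c] for c in range(r + 1, 3))
        x[r] = s / M[r][r]
    return x


def triangulate_rays(origins, directions):
    """Point minimizing the summed squared distance to all rays.

    For each ray with origin o and unit direction d, P = I - d d^T projects
    onto the plane perpendicular to the ray. The optimum p satisfies
    (sum of P) p = sum of (P o).
    """
    A = [[0.0] * 3 for _ in range(3)]
    b = [0.0] * 3
    for o, d in zip(origins, directions):
        d = normalize(d)
        for i in range(3):
            for j in range(3):
                p_ij = (1.0 if i == j else 0.0) - d[i] * d[j]
                A[i][j] += p_ij
                b[i] += p_ij * o[j]
    return solve_3x3(A, b)


# Synthetic check: three camera positions looking at one known point.
camera_positions = [(0.0, 0.0, 0.0), (2.0, 0.0, 0.0), (1.0, 0.0, -1.0)]
true_wheel = (1.0, 0.5, 3.0)
rays = [tuple(t - o for t, o in zip(true_wheel, cam)) for cam in camera_positions]
estimated_wheel = triangulate_rays(camera_positions, rays)
```

With noisy real detections the rays do not meet exactly, which is why a least-squares point is used rather than an exact intersection.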

Finally, I detected tire defects in images of the tread. For this initial prototype, I focused on tread defects such as low tread depth, center wear, and edge wear. To avoid having to collect a large dataset of real tire-defect images, I developed a Blender application that renders synthetic images of tire treads, both good and defective. I randomly varied the tread pattern, lighting, material coloring, and texture. The Blender shader graph to create the tread material is shown below.

I rendered approximately 1,000 synthetic tire images. Examples are shown below.
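The randomization described above can be sketched as a sampler that draws one set of scene parameters per rendered image. This is an illustration only: every parameter name, category, and numeric range below is a placeholder assumption, not a value from the actual Blender application, and the step that applies the parameters to a scene is left out.

```python
import random
from dataclasses import dataclass

# Placeholder categories; the real application's options are not documented here.
TREAD_PATTERNS = ("rib", "lug", "block", "zigzag")
DEFECTS = ("none", "low_tread", "center_wear", "edge_wear")


@dataclass(frozen=True)
class TreadSceneParams:
    tread_pattern: str
    defect: str
    groove_depth_mm: float
    light_energy_w: float
    light_azimuth_deg: float
    rubber_gray: float  # base color as a gray level in [0, 1]
    roughness: float    # surface roughness in [0, 1]

    @property
    def label(self):
        """Binary training label derived from the defect type."""
        return "good" if self.defect == "none" else "defective"


def sample_scene(rng):
    """Draw one random scene description (all ranges are illustrative)."""
    defect = rng.choice(DEFECTS)
    # Low-tread samples get shallow grooves; all others get a healthy depth.
    if defect == "low_tread":
        depth = rng.uniform(0.5, 2.0)
    else:
        depth = rng.uniform(6.0, 12.0)
    return TreadSceneParams(
        tread_pattern=rng.choice(TREAD_PATTERNS),
        defect=defect,
        groove_depth_mm=depth,
        light_energy_w=rng.uniform(200.0, 1500.0),
        light_azimuth_deg=rng.uniform(0.0, 360.0),
        rubber_gray=rng.uniform(0.02, 0.15),
        roughness=rng.uniform(0.4, 0.9),
    )


def sample_dataset(n, seed=0):
    """Draw n scene descriptions reproducibly from a seeded generator."""
    rng = random.Random(seed)
    return [sample_scene(rng) for _ in range(n)]


scenes = sample_dataset(1000, seed=42)
```

Seeding the generator makes a dataset reproducible, so a given image can be re-rendered later from its parameters alone.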

I trained a ResNet50 model to classify each image as “good” or “defective”. On a public dataset of tire tread images (“TyreNet”), the model was 91% accurate. I also applied the system to real images that we captured at a local truck transportation facility. Examples from this data collection are shown below.

The left image of each pair is the original captured image; the right image is a close-up of the tread area, which I cropped manually because the model expects a close-up image of the tread. The classification result is shown as a label on the right image.